When AI Runs the Risk Room: Deploying and Governing Agentic AI in BFSI

Why the institutions that figure out how to do both — innovate boldly and govern intelligently — will define the competitive landscape for the decade ahead.

Rajeev Koolath • Chief Innovation and Technology Officer, TruVector Group • truvector.ai


Regulatory frameworks referenced:

EU AI Act • BCBS • FSB • MAS FEAT • RBI AI Advisories • SEC • CFPB • FDA

Executive Summary

We're operating in a genuine paradox. In sectors like BFSI and Healthcare, the pressure to tightly govern and de-risk AI has never been higher. Regulatory expectations are sharpening — the EU AI Act is no longer a distant policy document, it's an operational reality with deadlines and teeth. Agencies like the U.S. Food and Drug Administration are actively signaling that accountability, explainability, and auditability aren't optional features; they're table stakes. Boards are asking hard questions about bias, data provenance, model drift, and third-party exposure. Rightly so.

But here's the interesting part: the same Generative and Agentic AI capabilities that introduce all this complexity are also the best tools we have for modernizing governance itself. AI can interpret regulatory change at scale, automate control testing, flag anomalies before they become incidents, and simulate risk scenarios before they materialize in production. The real tension in this industry isn't innovation versus regulation. It's whether governance stays static and manual, or evolves into something adaptive and intelligent. The institutions that figure this out won't just comply better. They'll compete better.

That's the lens through which this paper is written. Not as a warning about AI risk, and not as a cheerleading piece for AI adoption. Both of those are too simple. What we're actually navigating is a dual mandate — and the organizations that treat it as a binary choice will be wrong on both sides of the bet.

Two things are true at once, and this paper holds both:

Agentic AI is the most powerful RegTech capability this industry has ever had access to. Continuous compliance monitoring. Real-time regulatory interpretation. Adaptive risk orchestration. Institutions that move with urgency and intelligence here will build compounding structural advantages over those that wait.

That same technology, deployed without mature governance, will produce incidents. Not might — will. The institutions that invest in getting governance right from the start aren't being overly cautious. They're making the smarter strategic bet.

The organizations that come out ahead over the next decade won't be the fastest movers or the most conservative ones. They'll be the ones who embed governance so deeply into their AI infrastructure that every intelligent action is observable, correctable, and defensible — without that slowing them down.

"The future of BFSI compliance is neither fully manual nor fully autonomous. It’s co-governed intelligence — where AI acts at scale and humans remain accountable for outcomes."

1. The Compliance Operating Model Is Broken

What Practitioners Know but Rarely Say Out Loud

Most compliance leaders, if you get them in a room away from the formal agenda, will tell you the same thing: the RegTech stack they rely on was built for a different era, and it's showing its age. Transaction monitoring systems generating false positive rates that would embarrass anyone who examined them closely. KYC and AML pipelines that stall every time a new regulatory circular comes out. Rule engines that take months of re-engineering for every supervisory update. Audit processes that reliably catch problems after they've already become incidents.

None of this is the fault of the people running these systems. It's architectural. Deterministic rule engines can't parse the semantic ambiguity that characterizes how regulators actually write guidance. Manual policy interpretation at scale creates inconsistency — two experienced analysts reading the same circular will sometimes reach genuinely different conclusions. Fragmented control environments generate coverage gaps that no single team owns or can fully see. And the pace of cross-jurisdictional regulatory change has accelerated past what these systems were ever designed to absorb.

At some point, patching the old model stops working. That point is here.

What Agentic AI Actually Is — and Why the Distinction Matters

It's worth slowing down on this, because "AI" gets used to mean everything from a chatbot to a fully autonomous system, and the distinction matters enormously for compliance applications. Agentic AI refers specifically to systems that pursue multi-step goals, invoke external tools and data sources, retain context across extended workflows, coordinate with other specialized agents, and document their reasoning as they go. This isn't a smarter search engine. It's a different mode of operation entirely.

The underlying architecture combines several technologies that have each matured in recent years. Together, in the right combination, they produce something qualitatively new for regulated industries:

LLM-based reasoning at the frontier model level (GPT-4/5, Claude, Llama 3/4) — the ability to interpret complex, ambiguous language in context, including the nuanced, jurisdiction-specific language regulators actually use

Retrieval-Augmented Generation (RAG), which grounds that reasoning in authoritative source material in real time. This is the mechanism that moves an LLM from a sophisticated autocomplete system toward a dependable production tool: answers are anchored to vetted sources that can be cited and checked, which sharply reduces fabrication without eliminating it

Tool invocation — the ability to query live databases, trigger downstream APIs, update enterprise systems, and initiate workflows without human intermediation at every step

Context and Memory layers (vector stores, episodic logs, knowledge graphs) that preserve context across multi-session, multi-week compliance workflows

Multi-agent orchestration, where each specialized agent hands off enriched context to the next: detection, enrichment, policy interpretation, escalation, documentation

Put it together and you have a system that monitors without being prompted, reasons in regulatory context, acts on its conclusions, and leaves a documented trail that a regulator can actually inspect. That's a materially different capability than anything legacy RegTech offers — and it's available now.

2. What Agentic AI Actually Unlocks for RegTech

Dynamic Regulatory Intelligence

Here's a question worth sitting with: how long does it actually take your institution to fully absorb a material regulatory change — from the moment a new circular lands to the moment your controls, documentation, and training reflect it? For most organizations, the honest answer is months. Legal reads it, compliance interprets it, gap analysis gets commissioned, control owners get contacted, documentation eventually gets updated, training gets scheduled for a future quarter. Every step in that chain takes calendar time, internal bandwidth, and consultant hours.

That exposure window is real. And agentic AI compresses it toward near-real-time. Frontier models can perform genuine contextual reasoning across long regulatory documents — not keyword matching or pattern extraction, but the kind of semantic interpretation that grasps intent, jurisdictional scope, and operational implication. When a new regulatory document arrives, a well-designed agent system can work through it immediately:

Parse the document and identify substantive changes at clause level, against prior versions

Map those changes semantically to the internal control library — not by string match, but by meaning

Surface which controls are newly required, affected, potentially now duplicative, or newly insufficient

Draft updated procedural documentation and structured compliance impact summaries

Trigger review workflows for the relevant control owners, with the AI's analysis pre-populated — turning a blank-page exercise into a focused review
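The mapping step in the list above can be sketched in a few lines. This is a minimal illustration, not a production design: the control IDs and wording are invented, and bag-of-words cosine similarity stands in for the embedding model a real system would use to match by meaning rather than shared vocabulary.

```python
import math
import re
from collections import Counter

# Hypothetical control library; IDs and wording are invented for illustration.
CONTROL_LIBRARY = {
    "CTL-001": "Verify customer identity before account opening",
    "CTL-002": "Screen payments against sanctions lists before release",
    "CTL-003": "Retain transaction records for five years",
}

def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words; a crude stand-in for an embedding model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def map_clause_to_controls(clause: str, threshold: float = 0.2) -> list:
    """Return (control_id, score) pairs at or above the threshold, best first."""
    clause_vec = tokenize(clause)
    scored = [(cid, cosine(clause_vec, tokenize(text)))
              for cid, text in CONTROL_LIBRARY.items()]
    hits = [pair for pair in scored if pair[1] >= threshold]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)

# An invented clause from a hypothetical regulatory update.
changed_clause = "Firms must screen all outgoing payments against updated sanctions lists"
matches = map_clause_to_controls(changed_clause)
print(matches)
```

The affected controls then become the pre-populated starting point for the control owner's review. The 0.2 threshold is an arbitrary assumption and would need calibration against labeled examples.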

None of this is speculative. The capability exists in frameworks like LangChain, Haystack, and purpose-built BFSI RAG architectures right now. What's scarce in most institutions isn't the technology — it's the operating model and governance infrastructure needed to deploy it responsibly.

Continuous Control Monitoring That Actually Works

Compliance testing in most institutions is periodic out of economic necessity. Quarterly reviews, annual audits, point-in-time assessments. That's not a failure of intent — continuous human review of every control at production volumes genuinely isn't viable at scale. So institutions accept coverage gaps, price them into their risk appetite, and hope the next audit doesn't surface what's accumulated in between.

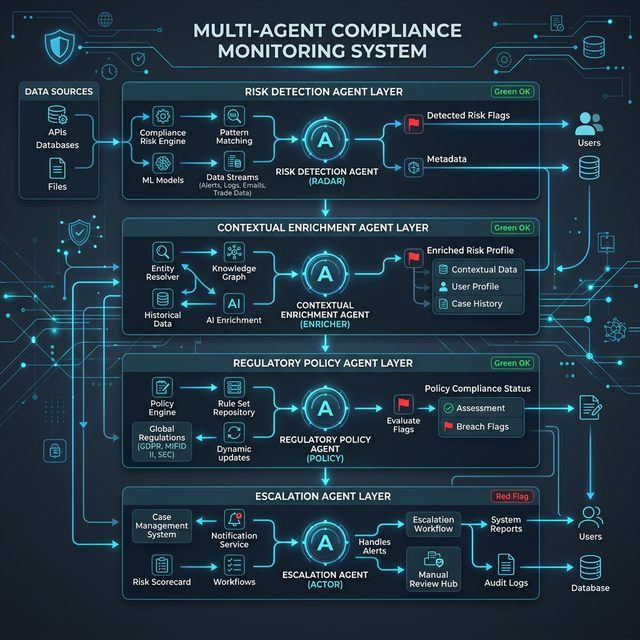

Agentic AI changes the economics of that tradeoff fundamentally. A well-designed multi-agent monitoring system works across four coordinated layers, each building on the last:

A Risk Detection Agent monitoring transaction streams, logs, and behavioral patterns continuously — not against static thresholds, but against learned baselines with explainable deviation logic that improves as it runs

A Contextual Enrichment Agent layering in entity context, counterparty history, and cross-transaction network patterns before any judgment is made — so nothing gets flagged on raw signal alone

A Regulatory Policy Agent interpreting each enriched event against live policy and jurisdiction-specific guidance — dynamically, not through rules that were current six months ago

An Escalation Agent determining disposition — archive, enhanced monitoring, or human escalation — and generating a complete, traceable documentation trail for every decision it makes

The compounding effects matter here. False positive rates drop when contextual enrichment comes before judgment. Human compliance teams get their time back from triaging rule-engine noise, and redirect it toward the genuinely ambiguous cases that actually need expert attention. Every automated decision carries a documented reasoning chain that holds up to regulatory examination. This isn't a marginal improvement over legacy monitoring — it's a different category of capability.
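To make the four-agent handoff concrete, here is a minimal sketch in which each agent is a plain function that enriches a shared event and appends to its reasoning trail. Everything here is an assumption for illustration: the baselines, the counterparty names, and the thresholds are invented, and the policy step is a fixed rule standing in for LLM-based interpretation against live guidance.

```python
from dataclasses import dataclass, field

# Hypothetical reference data, invented for illustration.
BASELINE_AMOUNT = {"ACME": 1_000.0}
DEFAULT_BASELINE = 500.0
HIGH_RISK_COUNTERPARTIES = {"SHELLCO"}
DISPOSITIONS = {
    "breach": "human_escalation",
    "elevated": "enhanced_monitoring",
    "none": "archive",
}

@dataclass
class Event:
    txn_id: str
    amount: float
    counterparty: str
    context: dict = field(default_factory=dict)
    trail: list = field(default_factory=list)  # reasoning recorded at each step
    disposition: str = "pending"

def detect(event: Event) -> Event:
    """Risk Detection Agent: compare the amount to a per-counterparty baseline."""
    baseline = BASELINE_AMOUNT.get(event.counterparty, DEFAULT_BASELINE)
    event.context["deviation"] = event.amount / baseline
    event.trail.append(f"detect: amount is {event.context['deviation']:.1f}x baseline")
    return event

def enrich(event: Event) -> Event:
    """Contextual Enrichment Agent: add entity context before any judgment."""
    event.context["high_risk_party"] = event.counterparty in HIGH_RISK_COUNTERPARTIES
    event.trail.append(f"enrich: high_risk_party={event.context['high_risk_party']}")
    return event

def apply_policy(event: Event) -> Event:
    """Regulatory Policy Agent: placeholder rule standing in for LLM interpretation."""
    ctx = event.context
    if ctx["high_risk_party"] or ctx["deviation"] > 5:
        ctx["policy_flag"] = "breach"
    elif ctx["deviation"] > 2:
        ctx["policy_flag"] = "elevated"
    else:
        ctx["policy_flag"] = "none"
    event.trail.append(f"policy: flag={ctx['policy_flag']}")
    return event

def escalate(event: Event) -> Event:
    """Escalation Agent: choose a disposition and record it."""
    event.disposition = DISPOSITIONS[event.context["policy_flag"]]
    event.trail.append(f"escalate: disposition={event.disposition}")
    return event

PIPELINE = (detect, enrich, apply_policy, escalate)

def run_pipeline(event: Event) -> Event:
    for agent in PIPELINE:
        event = agent(event)
    return event

result = run_pipeline(Event("T3", 3_000.0, "ACME"))
for line in result.trail:
    print(line)
```

The point of the sketch is the ordering: enrichment runs before any policy judgment, and every step leaves a line in the trail that a reviewer can read later.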

Explainable Decisions — Finally

The global regulatory direction on explainability is clear, and the pace is picking up. The BCBS has made model traceability a core element of its model risk management principles. The FSB has identified interpretability as a systemic financial stability concern. The CFPB has treated algorithmic explainability as an active enforcement issue. Under the EU AI Act, credit scoring of individuals and risk assessment and pricing for life and health insurance are classified as high-risk AI applications carrying mandatory transparency and documentation obligations. AML systems are not listed by name (fraud detection is carved out of the credit scoring category), so their classification needs a case-by-case assessment. This isn't a distant consideration. For institutions operating in the EU or with EU-facing products, the obligations are law, phasing in on a fixed timetable.

RAG-based agentic systems have a structural advantage in meeting these expectations that previous-generation systems simply don't share. The reasoning isn't reconstructed after a decision — it's documented in real time, grounded in the specific source documents consulted, the policies applied, and the logic followed. When a regulator asks why a transaction was flagged, why a credit decision was made, why a particular control was triggered, the answer exists in the system's own episodic record. It was written down when it happened. That's a qualitatively different level of auditability than anything built on black-box models or static rule engines.

Autonomous Regulatory Reporting

Regulatory reporting is one of the most expensive, most error-prone, and most risk-generating operational activities in BFSI — and arguably the one that least needs to involve as much human labor as it currently does. Manual extraction from systems with inconsistent data definitions. Reconciliation exercises that consume skilled bandwidth under deadline pressure. Template population processes that introduce exactly the kind of mechanical errors that attract supervisory attention in the first place. The irony is that an activity designed to demonstrate control generates its own risk exposure.

Agentic AI can automate this pipeline end-to-end — extraction, cross-system validation, anomaly detection, template population, and submission documentation — with human review concentrated on genuine exceptions rather than routine execution. The cost savings are real. But the more meaningful business case is the reduction in inconsistency and error that creates supervisory risk most institutions carry silently without ever fully quantifying it.

3. The Risks That Don't Make It Into Most Risk Registers

Here's the uncomfortable corollary to everything in Section 2: the same features that make Agentic AI powerful for RegTech — autonomy, speed, multi-system reach, the ability to act without human confirmation at each step — are also the features that make it dangerous without serious governance. The BFSI industry has a well-documented tendency to move fast on technology adoption and catch up on risk management later. Sometimes that works out. Sometimes it produces the kinds of incidents that end careers and attract enforcement action. With agentic systems operating at the speed and scale they do, we don't have the runway for the catch-up approach.

Six Risk Vectors Worth Taking Seriously

1. Autonomy Risk

Agents act. That's the whole point. But agents can act beyond their intended scope, especially in multi-agent environments where emergent coordination produces behaviors no individual designer anticipated — or would have approved. In BFSI, this isn't abstract. An agent action might block a legitimate customer transaction. It might auto-file a regulatory report. It might trigger an escalation chain that drives a sequence of downstream institutional responses. Unintended autonomous action in this domain carries direct operational, reputational, and regulatory consequences, not just a system error log to review later.

2. Emergent Behavior in Multi-Agent Systems

Agent orchestration frameworks like AutoGPT and CrewAI enable genuinely powerful coordination between specialized agents. They also create conditions for emergent behavior — outcomes arising from the interaction between agents that no single agent was designed to produce, and that wouldn't surface in any conventional test case. In a compliance context, this could look like systematic misclassification of transaction categories, inadvertent exploitation of regulatory inconsistencies between jurisdictions, or coordinated false negatives across a monitoring network. There's no malice involved. Just complexity that exceeds what conventional validation can catch.

3. Hallucination and Fabrication

Even the best frontier models — GPT-4/5, Claude, Llama 3/4 — produce confident, fluent, and factually incorrect outputs sometimes. In a general productivity application, this is a manageable nuisance. In a system drafting Suspicious Activity Reports, interpreting regulatory obligations with legal consequences, or generating audit documentation that regulators will eventually read, fabricated content creates direct legal and compliance exposure. RAG architectures dramatically reduce this risk for factual retrieval tasks by anchoring outputs to verified source material. But they don't eliminate it — and the residual risk demands active validation infrastructure, not an optimistic assumption of reliability.

4. Data Leakage via RAG Pipelines

RAG pipelines ingest sensitive internal documents to ground agent reasoning — customer data, transaction records, internal risk assessments, privileged legal communications. That's what makes them effective. It's also what makes access control at the semantic search layer genuinely critical. Without careful boundary management, a RAG-enabled agent can surface information that crosses data classification lines in ways that aren't visible from the query that triggered it. The same architecture that gives the system its capability creates the exposure.
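One way to picture boundary management at the retrieval layer is to filter on data classification before anything is ranked, so restricted text never reaches the prompt in the first place. The sketch below is a simplified assumption: the classification levels, the `Chunk` and `AgentIdentity` types, and the keyword-overlap ranking are all hypothetical stand-ins for a real vector store with metadata filtering.

```python
from dataclasses import dataclass

# Hypothetical classification scheme, ordered from least to most restricted.
CLASSIFICATION_RANK = {"public": 0, "internal": 1, "confidential": 2, "privileged": 3}

@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    classification: str

@dataclass(frozen=True)
class AgentIdentity:
    name: str
    clearance: str

def retrieve(query: str, index: list, agent: AgentIdentity, top_k: int = 3) -> list:
    """Filter by clearance BEFORE ranking, then rank by naive keyword overlap."""
    ceiling = CLASSIFICATION_RANK[agent.clearance]
    allowed = [c for c in index if CLASSIFICATION_RANK[c.classification] <= ceiling]
    terms = set(query.lower().split())
    ranked = sorted(
        allowed,
        key=lambda c: len(terms & set(c.text.lower().split())),
        reverse=True,
    )
    return ranked[:top_k]

index = [
    Chunk("D1", "sanctions screening procedure for payments", "internal"),
    Chunk("D2", "legal advice on sanctions screening failure", "privileged"),
    Chunk("D3", "public sanctions list overview", "public"),
]
agent = AgentIdentity("policy-agent", "internal")
results = retrieve("sanctions screening", index, agent)
print([c.doc_id for c in results])
```

Filtering first matters: if the privileged document were ranked and then dropped at the application layer, its content could still influence intermediate steps or logs.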

5. Model Supply Chain Risk

Open-source models (Llama 3/4, Mistral, and their expanding family of derivatives) offer real operational advantages: on-premise deployment, reduced data exfiltration risk, the ability to fine-tune for domain-specific needs. They also introduce a supply chain risk profile that most institutions haven't internalized yet. Model weights can carry encoded biases or adversarial vulnerabilities that aren't visible from outside. Security patches don't arrive on a commercial vendor's SLA schedule; they require internal evaluation capacity that most governance teams don't yet have in place. And the ecosystem moves fast enough that a model that cleared production review in Q1 may have documented vulnerabilities by Q3 that nobody inside the institution has caught yet.

6. Regulatory Arbitrage — by Accident

This one deserves attention precisely because it's subtle enough to miss entirely. Agents optimizing for compliance outcomes across complex multi-jurisdictional regulatory environments can, under certain conditions, identify and act on gray zones — not by design, but because they're pursuing well-defined objectives in environments where regulatory surfaces genuinely don't align cleanly. The agent isn't circumventing anything deliberately. It's doing exactly what it was built to do. The institution may be the last to realize that its AI system has been quietly operating in a regulatory gap for months.

4. The Governance Response: AI Governing AI

The governance response to Agentic AI isn't to slow adoption down. It's to build a governance infrastructure that operates at the same speed, and uses the same technology. A compliance committee reviewing quarterly AI reports is not adequate oversight for systems making thousands of consequential decisions per hour. What's needed is continuous, automated, AI-augmented oversight — with human accountability intact at every boundary that genuinely matters.

This isn't a philosophical position. It's an architectural requirement. And it connects directly back to the paradox we opened with: the same AI capabilities that create the governance challenge are the tools available to solve it.

A Compliance Environment That Just Doubled in Complexity

BFSI institutions now operate under what amounts to a dual compliance mandate, and it's worth naming this explicitly because most governance frameworks haven't caught up to it yet. They must continue satisfying financial regulation — BCBS model risk principles, MAS FEAT, RBI AI advisories, CFPB expectations. And simultaneously they must satisfy AI-specific regulation, most consequentially the EU AI Act's risk-based classification framework, which arrived with real obligations attached.

Under the EU AI Act, systems for credit scoring of individuals and for life and health insurance pricing are classified as high-risk AI; AML systems are not listed by name and should be assessed case by case. A high-risk classification isn't aspirational: it carries mandatory requirements for transparency documentation, human oversight mechanisms, data governance standards, and ongoing accuracy validation. The SEC is scrutinizing AI-enabled decision systems in capital markets with increasing specificity. The CFPB has made algorithmic explainability an active enforcement priority, not a best-practice recommendation.

Two compliance burdens in parallel, both tightening. The only sustainable path is AI that helps you manage both simultaneously. Trying to handle it with expanded headcount and manual processes is a losing battle structurally.

The AI Governance Architecture

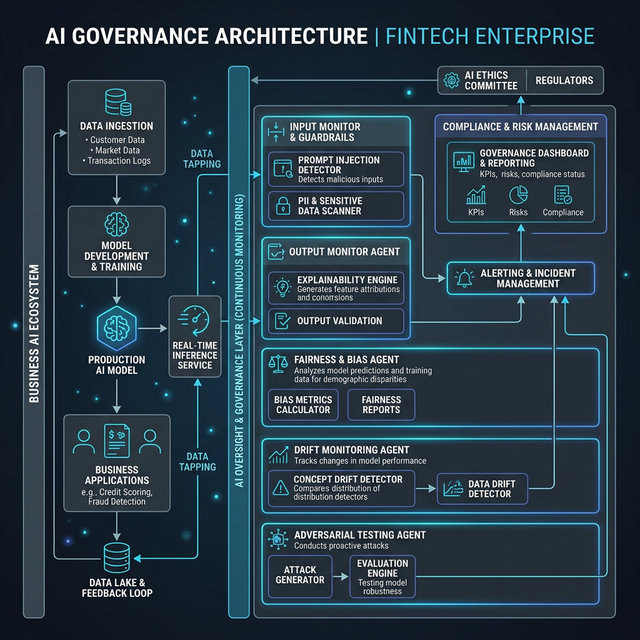

Effective AI governance in an agentic environment requires a dedicated oversight agent layer running in parallel with the business AI — not bolted on as an afterthought, but designed in from the start as a first-class architectural component. Five elements form the core of this layer:

Output Monitor Agent: Reviews every business AI output for policy compliance, internal consistency, and anomalies before any action is taken or documentation finalized. Not a sample. Every output.

Bias and Fairness Agent: Evaluates model outputs continuously across demographic dimensions for disparities and fairness violations — in real time as decisions are made, not when the next audit comes around.

Drift Monitoring Agent: Watches for model behavior deviating from validated production baselines and triggers human review before the deviation compounds into a systemic problem.

Prompt Injection Detector: Monitors for adversarial inputs designed to manipulate agent behavior. This is a real and underappreciated attack surface in any system that processes externally-sourced text — counterparty communications, regulatory filings, third-party data feeds.

Adversarial Testing Agent: Continuously probes the business AI with edge cases, synthetic adversarial examples, and boundary conditions to surface failure modes before they materialize in production under real conditions.

This layer feeds an immutable audit ledger and a live human oversight dashboard — giving compliance and risk leadership real-time visibility across the full population of AI decisions, not just the exceptions that escalated. That's what genuine AI governance looks like operationally. Not a policy document, not a committee with good intentions. An infrastructure that runs.
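The audit ledger idea can be illustrated with a hash chain, where each entry commits to the one before it. This is a sketch under stated assumptions, not a reference design: the `AuditLedger` class and the record fields are hypothetical, and a hash chain is tamper-evident rather than truly immutable, so a real deployment would pair it with write-once storage or external anchoring.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64

class AuditLedger:
    """Append-only log where each entry's hash covers the previous entry's hash."""

    def __init__(self) -> None:
        self._entries = []

    def append(self, record: dict) -> str:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        payload = json.dumps(record, sort_keys=True)
        entry_hash = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        self._entries.append({"prev": prev_hash, "payload": payload, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            expected = hashlib.sha256(
                (prev_hash + entry["payload"]).encode("utf-8")
            ).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True

ledger = AuditLedger()
ledger.append({"agent": "output-monitor", "txn": "T3", "verdict": "pass"})
ledger.append({"agent": "drift-monitor", "model": "scoring-v2", "verdict": "watch"})
print(ledger.verify())
```

Because each hash depends on everything before it, altering an old record after the fact is detectable the next time the chain is verified.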

5. A Blueprint for the Modern BFSI AI Architecture

Drawing from current LLM and agent framework capabilities, the regulatory landscape as it actually stands today, and the operational realities we've seen on the ground at financial institutions, what follows is a practical target architecture — one that takes the capability opportunity and the governance imperative with equal seriousness.

Six Design Principles That Cannot Be Negotiated Away

Regulation-Aware by Design: Regulatory corpora are first-class inputs into AI systems, updated continuously, not reference documents consulted occasionally when it feels necessary.

Zero-Trust Agent Architecture: Every agent operates within explicit, minimal permission boundaries. No agent gets access beyond what its specific function requires. Every action generates an immutable log entry. Trust is earned structurally, not assumed.

Human-in-the-Loop at Material Boundaries: Autonomous operation is appropriate — genuinely desirable — for routine monitoring, analysis, and documentation. High-impact decisions require documented human review. That line needs to be drawn explicitly and reviewed regularly, not left to evolve through convention.

Policy-to-Code Traceability: Every automated control action traces to a specific policy requirement, which traces to specific regulatory source text. The accountability chain has no gaps.

Continuous Model Risk Management: Model validation isn't an annual event. Drift, bias, and performance are monitored continuously, with automated escalation thresholds that put a human in the loop before problems become systemic.

Explainability as Infrastructure: Not a reporting deliverable. Not something retrofitted before the next regulatory examination. An architectural requirement built into every agent's output from the first deployment.
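The policy-to-code traceability principle in the list above lends itself to a simple structural check. The sketch below assumes three hypothetical lookup tables (actions, controls, policies) with invented identifiers, including a made-up regulatory citation, and flags any automated action whose chain back to source text has a gap.

```python
# Hypothetical registries; every identifier and citation here is invented.
ACTIONS = {"block_payment": "CTL-002", "auto_close_alert": "CTL-009"}
CONTROLS = {"CTL-002": {"policy": "POL-SANC-01"}, "CTL-009": {"policy": None}}
POLICIES = {"POL-SANC-01": {"source": "EXAMPLE-REG section 4.2"}}

def trace(action: str) -> dict:
    """Walk action -> control -> policy -> regulatory source; None marks a gap."""
    control = ACTIONS.get(action)
    policy = CONTROLS.get(control, {}).get("policy") if control else None
    source = POLICIES.get(policy, {}).get("source") if policy else None
    return {"action": action, "control": control, "policy": policy, "source": source}

def untraceable_actions() -> list:
    """List automated actions whose accountability chain is incomplete."""
    return sorted(a for a in ACTIONS if None in trace(a).values())

print(trace("block_payment"))
print(untraceable_actions())
```

Run as a deployment gate, a check like this would stop an agent action from shipping until someone has linked it to a policy and a source clause.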

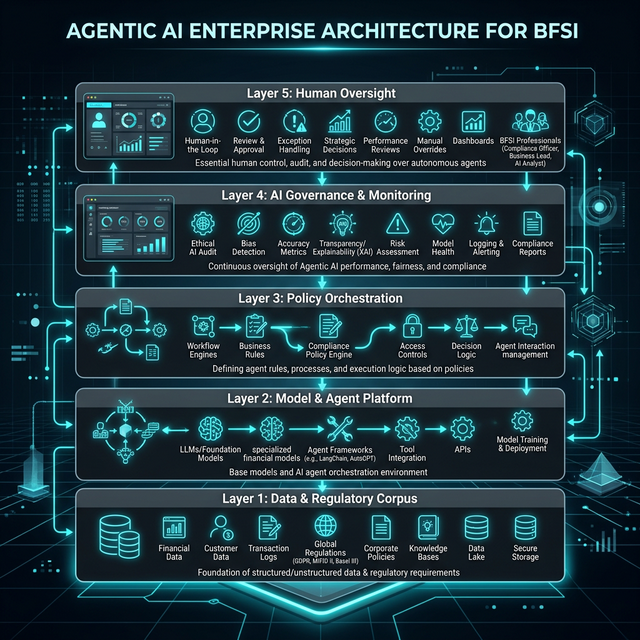

The Five-Layer Architecture

Layer 1: Data and Regulatory Corpus

Everything else depends on this, and it routinely gets underfunded. The foundation is secure, validated ingestion pipelines for internal operational data, regulatory publications, supervisory guidance, and market intelligence. Jurisdiction tagging that lets multi-entity institutions route the right regulatory context to the right legal entity — not as a manual exercise, but as a structural property of the data layer. Full queryable lineage from source document to agent consumption. Without this, every intelligence layer above it is reasoning from assumptions that will eventually prove wrong.

Layer 2: Model and Agent Platform

A governed deployment platform for the full AI model lifecycle — not a collection of individually deployed models that accumulates over time into an unmanageable shadow estate. An approved model registry with version control, evaluation harnesses, and documented performance baselines. RAG infrastructure with access controls enforced at the retrieval layer, not assumed at the application layer. Open-source and proprietary models treated with identical rigor — the same onboarding process, the same monitoring cadence, the same patch governance obligations. The word 'open-source' doesn't mean 'exempt from governance.'

Layer 3: Policy Orchestration

This is the intelligence layer that actually translates regulatory requirements into executable AI workflows. Machine-readable control libraries that represent regulatory obligations as structured, queryable data — not Word documents sitting in shared drives that someone updates when they get around to it. Rule-agent hybrid architectures combining deterministic rule execution for clear-cut requirements with LLM reasoning for genuine interpretation and edge cases. Task decomposition engines that take compliance objectives and break them into concrete, auditable agent-level actions.
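A rule-agent hybrid can be sketched as a dispatcher that tries deterministic rules first and only falls back to model reasoning when no rule claims the case. The rules, the 10,000 threshold, and the transaction fields below are illustrative assumptions, and `llm_interpret` is a placeholder returning a fixed answer where a real system would call a model.

```python
from typing import Callable, Optional

def rule_cash_threshold(txn: dict) -> Optional[str]:
    """Deterministic rule for a clear-cut case (threshold is a made-up example)."""
    if txn["type"] == "cash" and txn["amount"] >= 10_000:
        return "report"
    return None

def rule_small_domestic(txn: dict) -> Optional[str]:
    """Deterministic rule clearing obviously low-risk activity."""
    if not txn["cross_border"] and txn["amount"] < 1_000:
        return "clear"
    return None

def llm_interpret(txn: dict) -> str:
    """Placeholder for an LLM call; a real system would reason over policy text."""
    return "needs_review"

def decide(txn: dict, rules: list, interpreter: Callable) -> dict:
    """Apply rules in order; fall back to the interpreter for everything else."""
    for rule in rules:
        outcome = rule(txn)
        if outcome is not None:
            return {"decision": outcome, "decided_by": rule.__name__}
    return {"decision": interpreter(txn), "decided_by": interpreter.__name__}

RULES = [rule_cash_threshold, rule_small_domestic]
ambiguous = {"type": "wire", "amount": 8_000, "cross_border": True}
print(decide(ambiguous, RULES, llm_interpret))
```

Recording `decided_by` on every outcome keeps the audit trail honest about which decisions were deterministic and which involved model interpretation.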

Layer 4: AI Governance and Monitoring

The oversight layer that makes everything else trustworthy enough to rely on. Continuous bias testing. Real-time drift detection. Output validation before consequential actions land in production. Escalation engines with calibrated thresholds that have been tuned to the institution's specific risk appetite. This layer runs continuously and generates the documentation trail that regulators and internal auditors will eventually inspect. It is the operational evidence that AI is being governed responsibly — not the policy framework that says it should be.
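Drift detection with calibrated escalation can be illustrated with the Population Stability Index, a common way to compare a production score distribution against its validated baseline. This is one possible metric, not necessarily the right one for LLM outputs; the 0.1 and 0.25 cut-offs are widely used rules of thumb, and an institution would tune them to its own risk appetite.

```python
import math

def psi(expected: list, actual: list, eps: float = 1e-6) -> float:
    """Population Stability Index over matching bins of proportions."""
    if len(expected) != len(actual):
        raise ValueError("expected and actual must have the same number of bins")
    return sum(
        (a - e) * math.log((a + eps) / (e + eps))
        for e, a in zip(expected, actual)
    )

def drift_action(score: float, watch: float = 0.1, escalate: float = 0.25) -> str:
    """Map a PSI score to an action; thresholds are rule-of-thumb assumptions."""
    if score >= escalate:
        return "human_review"
    if score >= watch:
        return "watch"
    return "ok"

# Share of model scores per bin: validated baseline vs. invented production data.
baseline = [0.25, 0.25, 0.25, 0.25]
this_week = [0.10, 0.20, 0.30, 0.40]

score = psi(baseline, this_week)
print(round(score, 3), drift_action(score))
```

The middle "watch" band is the useful part: it gives a human an early signal while the deviation is still small enough to investigate calmly.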

Layer 5: Human Oversight

Human oversight done right isn't a formality at the top of an AI system. It's a meaningful accountability function with real operational teeth: dashboards that surface what actually matters, not everything. Escalation protocols with defined response timelines. Decision rights documented explicitly — who reviews what, at which threshold, on what cadence. Audit logs that make the human oversight function itself as transparent and auditable as the AI systems it oversees. People with genuine authority to intervene, and a culture that makes using that authority normal rather than exceptional.

6. Getting There: A Practical Implementation Roadmap

The architecture above is where institutions need to get to. Getting there requires a sequenced approach that builds real capability at each phase without outrunning the governance controls. The temptation to compress the timeline — almost always under competitive pressure — is where implementations run into trouble.

Phase 1: Foundation (Months 0–6)

Most organizations that believe they're ready to put Agentic AI into production compliance environments aren't. Not because the technology isn't mature enough — it is. But because the governance infrastructure that makes deployment responsible doesn't exist yet. Phase 1 is an honest assessment followed by building what's missing, before anything scales.

Stand up an AI Governance Council with real cross-functional authority — Legal, Compliance, Risk, Technology, Operations, and line-of-business. A council that can only advise and can't stop anything is not governance. It's theater.

Establish a centralized AI platform before the shadow AI problem gets worse. Teams running their own LLMs outside any governance process are already widespread in most institutions. Getting ahead of this now is both a risk management action and a capability play.

Define a high-risk use case taxonomy in writing before deploying anything consequential. Which AI applications require which levels of oversight, testing, and documented approval? Answering this explicitly — not leaving it to interpretation case by case — is one of the highest-leverage decisions in Phase 1.

Run focused pilots on high-value, lower-stakes use cases: agent-assisted regulatory analysis, policy gap identification, documentation drafting. These build institutional fluency with the technology and generate real-world evidence of performance before anything critical depends on it.
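The use case taxonomy from the list above is easier to enforce when it exists as data rather than prose. Here is a minimal sketch, assuming an invented three-tier scheme with two classification criteria; a real taxonomy would carry more dimensions and would be owned by the governance council rather than by engineering.

```python
from enum import Enum

class Tier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3

# Hypothetical oversight requirements per tier, invented for illustration.
OVERSIGHT = {
    Tier.LOW: {"human_review": "sampled", "approval": "team lead"},
    Tier.MEDIUM: {"human_review": "all exceptions", "approval": "risk owner"},
    Tier.HIGH: {"human_review": "every decision", "approval": "governance council"},
}

def classify(use_case: dict) -> Tier:
    """Assign a tier from two assumed criteria: customer impact and autonomy."""
    affects = use_case["affects_customers"]
    autonomous = use_case["acts_autonomously"]
    if affects and autonomous:
        return Tier.HIGH
    if affects or autonomous:
        return Tier.MEDIUM
    return Tier.LOW

pilot = {"name": "policy gap analysis", "affects_customers": False, "acts_autonomously": False}
blocker = {"name": "auto-block payments", "affects_customers": True, "acts_autonomously": True}

for use_case in (pilot, blocker):
    tier = classify(use_case)
    print(use_case["name"], tier.name, OVERSIGHT[tier])
```

Under these assumed criteria, the Phase 1 pilots land in the lowest tier, which is consistent with starting on lower-stakes use cases.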

Phase 2: Controlled Expansion (Months 6–18)

With governance infrastructure genuinely in place — not declared in a deck, actually operational — the institution can start deploying into production compliance environments. Under controlled conditions that generate trackable evidence of performance, behavior, and unexpected risk.

Deploy RAG-based regulatory intelligence into the regulatory change management function, reducing or augmenting manual review cycles with a system that actually keeps up with regulatory volume

Introduce AI monitoring agents alongside existing processes, with human oversight dashboards that build organizational confidence and visibility before autonomy gets expanded

Integrate AI outputs into existing GRC platforms and workflows rather than building parallel systems. Parallel systems create reconciliation burdens, accountability gaps, and governance blind spots.

Implement model risk controls aligned with BCBS guidance, adapted for LLM-based systems — which behave differently from conventional statistical models in ways that standard MRM frameworks weren't designed to handle

Phase 3: Intelligent Orchestration (Months 18–36)

With demonstrated production performance across Phase 2 deployments and validated governance controls generating confidence at board and senior management levels, the institution is ready for the full operating model: multi-agent compliance automation running continuously, adapting to regulatory change dynamically, and producing defensible audit trails as standard output.

Multi-agent compliance automation across AML, sanctions, conduct risk, and regulatory reporting — not as a pilot, as the operating baseline

Continuous control monitoring replacing periodic testing for appropriate use case classes, with coverage data that demonstrates equivalence or improvement for regulatory and audit purposes

AI-assisted internal audit expanding team capacity and substantive coverage — with human judgment explicitly preserved for the assessments where it genuinely matters

End-to-end regulatory reporting automation with human exception review focused on the genuinely unusual, not the routine

7. The Forces at Work — and How to Navigate Them

| Strategic Force | The Risk If Unmanaged | The Governance Response |
|---|---|---|
| Agent Autonomy | Unintended decisions with real financial, regulatory, and reputational consequences, before humans have a chance to intervene | Human-in-the-loop gates at every material decision boundary; tested and documented capability limits per agent type |
| Innovation Pressure | Shadow AI deployments proliferating outside governance structures, creating invisible risk the institution can't see or measure | Centralized AI platform with streamlined onboarding; governance designed to enable speed, not obstruct it |
| Open-Source Model Adoption | Security exposures, unpatched vulnerabilities, and inadequate domain-specific evaluation before production deployment | Model registry with mandatory evaluation harness and explicit patch governance; open-source held to the same standards as commercial models |
| Regulatory Compliance Burden | Compliance costs scaling with complexity faster than revenue: structural pressure on operating models that compounds over time | AI-driven compliance automation reducing per-unit compliance cost while improving accuracy, coverage, and speed |
| Explainability Requirements | Opaque model decisions accumulating supervisory, legal, and consumer protection exposure that can't be easily unwound later | RAG architectures with traceable reasoning built in at the architecture level; explainability as a design requirement, not a documentation afterthought |

8. The Central Insight

There's a framing that keeps coming up in executive conversations about AI in financial services, and I want to push back on it directly: the idea that institutions face a genuine strategic choice between moving fast on AI adoption and managing risk responsibly. That framing is wrong. And acting on it leads to bad decisions no matter which side you're on.

Institutions that move aggressively on agentic AI without the governance architecture to support it will create incidents. The combination of system complexity, domain sensitivity, and the propagation speed of AI-enabled failures in interconnected financial environments makes ungoverned deployment uniquely costly here. The question isn't whether problems will emerge. It's whether they emerge as manageable operational issues or as regulatory enforcement actions.

On the other side, institutions that wait — for perfect regulatory clarity, for someone else to go first and prove the concept, for a mature industry standard to follow — will fall behind in a capability competition that compounds. Three years from now, the gap between AI-augmented compliance functions and those still running on legacy RegTech will be visible in cost structures, regulatory outcomes, and talent retention alike.

The path that actually works is the one the paradox points toward: move with genuine urgency on capability adoption, while simultaneously building governance infrastructure that makes that adoption sustainable. These aren't in tension. They reinforce each other, if you design for it from the start.

The institutions that win won't choose between innovation and governance. They'll build systems where every intelligent action is observable, defensible, and improvable, with AI embedded in governance as a structural participant rather than standing outside it waiting to be reviewed quarterly.

9. Conclusion

Agentic AI isn't an incremental improvement to compliance operations. It's a new operational substrate — one that changes the economics, the speed ceiling, and the quality floor of regulatory risk management in ways that will reshape competitive positioning across BFSI over the next ten years. The institutions that recognize this early and build for it deliberately will look very different from those that treat it as another technology upgrade to be managed through the standard IT change process.

The path forward is clear, even if the execution is hard:

Deploy Agentic AI to build adaptive, continuous, intelligent compliance — starting now, in controlled contexts with proper governance, not waiting for conditions that will never be perfectly right

Embed AI governance into enterprise risk frameworks from day one — not as a box to check before the next audit, but as a genuine operational control that makes AI investment sustainable and defensible

Use AI to govern AI — because the oversight challenge operates at the same speed and scale as the capability, and only AI-augmented oversight can keep pace with it

Treat explainability and traceability as architecture decisions made when systems are designed, not documentation tasks cleaned up before examinations

The institutions that get this right won't just avoid regulatory censure. They'll operate at lower cost, with sharper risk visibility, and with the kind of supervisory relationship that comes from being able to demonstrate, clearly and continuously, that your AI systems are being governed well. That's a durable structural advantage, not a compliance checkbox.

The ones who get it wrong — through reckless speed, through excessive caution, or through failing to recognize the governance investment as the strategic enabler it actually is — will face consequences that compound and don't reverse easily. The window to build the right foundation is open now. It won't stay open indefinitely.

The future of BFSI governance is co-governed intelligence. The real tension was never innovation versus regulation — it was always static and manual versus adaptive and intelligent. Build for the latter. Now.

About the Author

Rajeev Koolath is Chief Innovation and Technology Officer at TruVector Group, where he leads the firm's enterprise AI architecture practice and advises BFSI institutions across North America, Europe, and Asia-Pacific on AI transformation, governance frameworks, and RegTech modernization. He has spent his career working at the intersection of large-scale AI system architecture and the operational realities of regulated financial environments — the place where what AI can do meets what institutions can responsibly deploy. His work focuses on helping organizations navigate the paradox at the center of this paper: moving decisively on AI capability while building the governance infrastructure that makes that speed sustainable.

TruVector Group | https://truvector.ai

References

EU AI Act — European Parliament, 2024. Risk-based classification framework for AI systems, including high-risk designations, transparency obligations, human oversight requirements, and compliance timelines for regulated sectors.

Basel Committee on Banking Supervision — Principles for the Sound Management of Operational Risk and updated model risk management guidance; relevant to LLM-based system validation in banking contexts.

Financial Stability Board — Artificial Intelligence and Machine Learning in Financial Services (2017, updated guidance 2023); supervisory and systemic risk implications for financial stability.

Monetary Authority of Singapore — FEAT (Fairness, Ethics, Accountability, Transparency) Principles for the Use of AI and Data Analytics in Singapore's Financial Sector.

Reserve Bank of India — AI governance advisories and model risk management guidance for regulated financial institutions operating in or with India.

U.S. Securities and Exchange Commission — AI-related supervisory activities, disclosure expectations, and enforcement posture in capital markets, 2023–2025.

U.S. Consumer Financial Protection Bureau — Consumer protection expectations in algorithmic decisioning, adverse action explanations, and AI explainability requirements.

U.S. Food and Drug Administration — AI/ML-based Software as a Medical Device guidance; referenced here for its articulation of accountability and auditability expectations in regulated AI, with parallel implications for BFSI.

GPT-4 System Card (OpenAI, 2023), Claude Model Card (Anthropic, 2024), Llama 3 Technical Report (Meta AI, 2024), Mistral 7B (Mistral AI, 2023). Frontier LLM architectures referenced throughout this analysis.

LangChain, AutoGPT, CrewAI, Haystack — Leading open-source agent orchestration and RAG frameworks evaluated in the context of BFSI production deployment.